Know what inputs your models get and how they respond. Make sense of unstructured data to uncover patterns and changes.

LLMs and NLP models can produce unexpected or incorrect responses, and their quality may degrade as data and usage patterns shift. Visibility into real-world model performance is critical for reliable operations.

Evidently extracts interpretable signals from unstructured data, giving a clear view of model inputs, outputs, and how they change over time. This helps you decide when to label data, fine-tune models, or modify prompts, and which behaviors need attention.

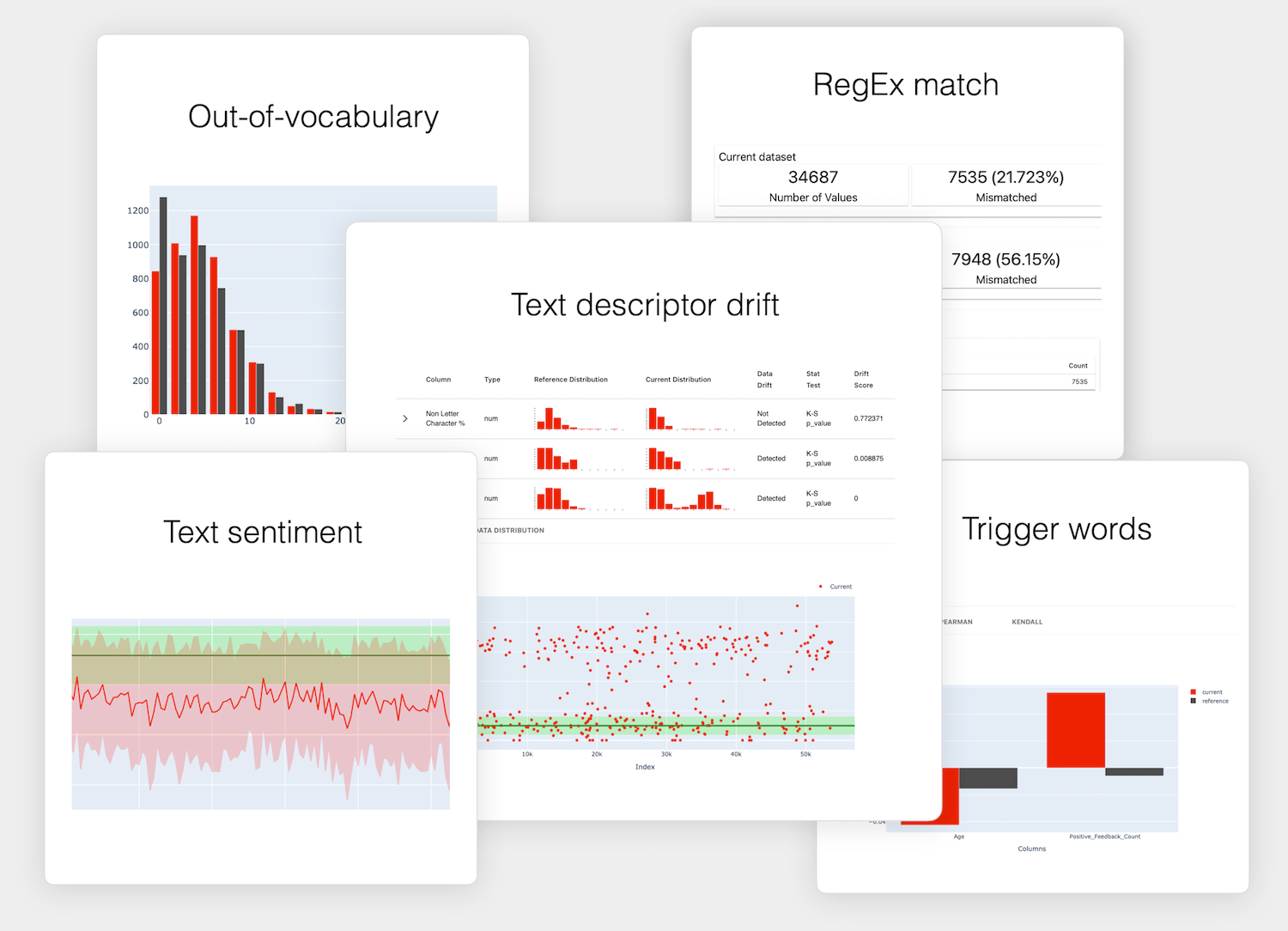

Capture important properties of text data with auto-generated descriptors: from the number of words to text sentiment. Track them over time to detect shifts.
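
As a rough illustration of what a descriptor is, the sketch below computes a few per-text properties and averages them per batch so batches can be compared over time. It is plain Python, not Evidently's API. The word lists and the lexicon-based sentiment score are hypothetical simplifications; real sentiment descriptors usually rely on a trained model.

```python
import re
from statistics import mean

# Hypothetical toy lexicons; a real sentiment descriptor would use a trained model.
POSITIVE = {"good", "great", "thanks", "helpful"}
NEGATIVE = {"bad", "wrong", "broken", "useless"}

WORD_RE = re.compile(r"[a-z']+")

def descriptors(text):
    """Compute a few interpretable properties of a single text."""
    words = WORD_RE.findall(text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return {
        "word_count": len(words),
        "char_length": len(text),
        # Crude lexicon score in [-1, 1]; 0.0 when no lexicon words occur.
        "sentiment": (pos - neg) / (pos + neg) if pos + neg else 0.0,
    }

def batch_summary(texts):
    """Average each descriptor over a batch, e.g. one day of model inputs."""
    rows = [descriptors(t) for t in texts]
    return {key: mean(row[key] for row in rows) for key in rows[0]}

monday = ["Thanks, this was helpful", "Great answer"]
tuesday = ["This is wrong", "Useless and broken reply, please fix"]
print(batch_summary(monday))   # mean sentiment 1.0
print(batch_summary(tuesday))  # mean sentiment -1.0
```

Plotting these batch averages over time makes a shift in length or tone easy to spot.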

Know when new data differs from the data your model saw before. Identify the specific words that contributed most to the drift detection result.
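
One simple way to surface the words behind a text drift result is to compare word frequencies between a reference batch and a current batch. The sketch below ranks words by a smoothed log frequency ratio. This is a swapped-in, simpler technique and not necessarily how Evidently attributes drift (classifier-based approaches, which learn to tell reference texts from current ones, are another common option); `top_drifting_words` and its parameters are hypothetical.

```python
import math
import re
from collections import Counter

WORD_RE = re.compile(r"[a-z']+")

def word_counts(texts):
    """Count lowercase word occurrences across a batch of texts."""
    return Counter(w for t in texts for w in WORD_RE.findall(t.lower()))

def top_drifting_words(reference, current, k=3, alpha=1.0):
    """Rank words by how much more frequent they are in `current` than in `reference`.

    Uses add-alpha smoothing so words missing from one batch do not cause log(0).
    Ties are broken alphabetically to keep the output deterministic.
    """
    ref, cur = word_counts(reference), word_counts(current)
    vocab = set(ref) | set(cur)
    ref_total = sum(ref.values()) + alpha * len(vocab)
    cur_total = sum(cur.values()) + alpha * len(vocab)
    scores = {
        w: math.log((cur[w] + alpha) / cur_total) - math.log((ref[w] + alpha) / ref_total)
        for w in vocab
    }
    return sorted(scores, key=lambda w: (-scores[w], w))[:k]

reference = ["how do I reset my password", "password reset link expired"]
current = ["refund for my order", "where is my refund", "order refund status"]
print(top_drifting_words(reference, current))  # ['refund', 'order', 'for']
```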

Catch changes in embedding distributions. Pick and tune methods, from distance metrics to model-based drift detection.
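
A common distance-based check compares the average embedding of a reference batch with that of the current batch. The sketch below uses the Euclidean distance between mean vectors in plain Python. The 0.2 threshold is a hypothetical value that would need tuning for a given embedding model, and `embedding_drift` is an illustrative helper, not Evidently's API.

```python
import math

def mean_vector(vectors):
    """Element-wise mean of equally sized embedding vectors."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def embedding_drift(reference, current, threshold=0.2):
    """Return (distance, drifted), where distance is the Euclidean distance
    between the mean reference embedding and the mean current embedding."""
    distance = math.dist(mean_vector(reference), mean_vector(current))
    return distance, distance > threshold

reference = [[0.1, 0.2], [0.3, 0.2]]
current = [[0.6, 0.5], [0.8, 0.7]]
distance, drifted = embedding_drift(reference, current)
print(round(distance, 3), drifted)  # 0.64 True
```

Mean-vector distance is cheap but can miss changes that leave the average intact; model-based detection trades extra compute for sensitivity to such shifts.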

Check if model inputs or outputs match a regular expression or contain specific words. Track properties over time and monitor compliance.
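
Rule-based checks like these are straightforward to express directly. The sketch below flags outputs that contain an email address or any word from a deny list, then reports the share of outputs that pass. The regular expression, the word list, and the function names are hypothetical examples, not Evidently's built-in checks.

```python
import re

# Hypothetical rules: a rough email pattern and a small deny list of words.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")
BANNED_WORDS = {"competitor", "guarantee"}

def check_output(text):
    """Report whether a text contains an email address and which banned words it uses."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return {
        "contains_email": bool(EMAIL_RE.search(text)),
        "banned_words": sorted(words & BANNED_WORDS),
    }

def passes(text):
    """A text passes when it has no email address and no banned words."""
    result = check_output(text)
    return not result["contains_email"] and not result["banned_words"]

def compliance_rate(texts):
    """Share of texts that pass all checks; track this value per batch over time."""
    return sum(passes(t) for t in texts) / len(texts)

outputs = ["Contact me at jane@example.com", "We guarantee a refund", "Your order has shipped"]
print(compliance_rate(outputs))  # 0.3333333333333333
```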

Evaluate prediction drift to see whether model behavior has changed and it's time to label your data. Quickly visualize model quality whenever you get feedback. Track everything.
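
When ground-truth labels arrive late, a shift in the model's predictions can serve as an early signal. The sketch below computes the Population Stability Index (PSI) between the predicted-label distributions of two batches. The choice of PSI and the `eps` floor, which avoids taking the log of zero, are assumptions for illustration rather than Evidently's default method.

```python
import math
from collections import Counter

def psi(reference, current, eps=1e-4):
    """Population Stability Index between two lists of predicted labels."""
    labels = set(reference) | set(current)
    ref, cur = Counter(reference), Counter(current)
    total = 0.0
    for label in labels:
        # Share of each label per batch, floored at eps so the log stays finite.
        p = max(ref[label] / len(reference), eps)
        q = max(cur[label] / len(current), eps)
        total += (q - p) * math.log(q / p)
    return total

reference = ["positive"] * 70 + ["negative"] * 30
current = ["positive"] * 40 + ["negative"] * 60
print(round(psi(reference, current), 2))  # 0.38
```

A PSI above roughly 0.2 is often read as a significant shift, though that cutoff is a rule of thumb rather than a fixed standard.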

Easily add Evidently to existing workflows, no matter where you deploy.